Project Overview

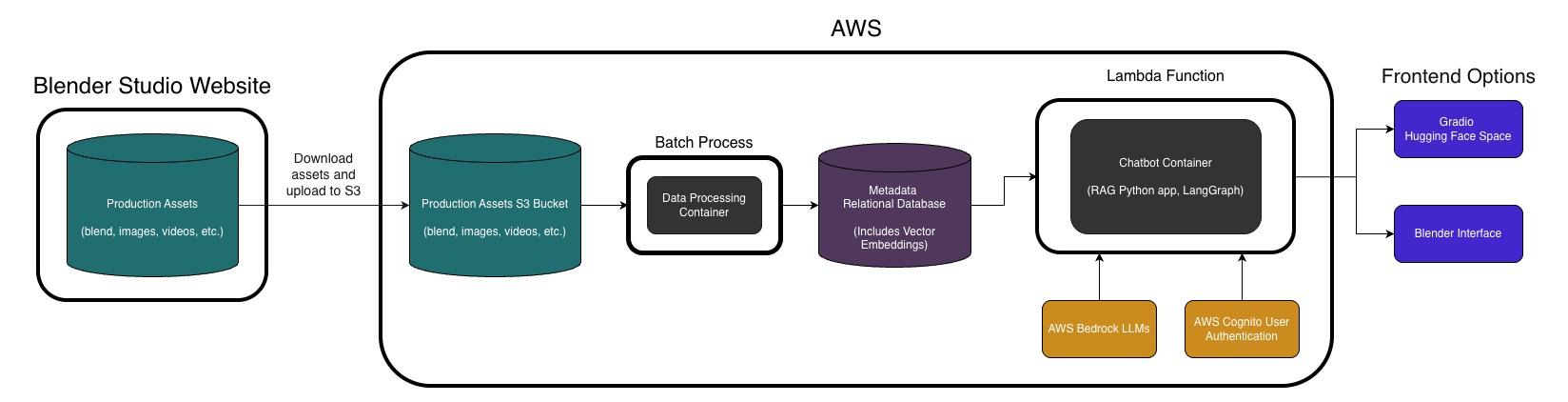

An assistant built with a containerized AWS Lambda backend, LangChain, Bedrock models, and PostgreSQL integration. It answers natural-language questions about a database of CG production assets.
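As a rough illustration of how a containerized Lambda backend can receive a chat request, here is a minimal handler sketch. It assumes the function sits behind API Gateway or a Lambda function URL that delivers the user's message as a JSON body with a `query` field. That field name is hypothetical. `run_agent` is also a hypothetical placeholder for the agent call, not the project's actual entry point.

```python
import json
from typing import Any, Dict


def run_agent(query: str) -> str:
    """Hypothetical stand-in for invoking the LangGraph agent."""
    return f"(agent response to: {query})"


def _response(status: int, payload: Dict[str, Any]) -> Dict[str, Any]:
    # Response shape used by API Gateway proxy integrations and function URLs.
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Lambda entry point; `context` is unused in this sketch."""
    try:
        # Proxy-style events deliver the HTTP body as a JSON string.
        body = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "Request body must be valid JSON."})

    # "query" is a hypothetical field name for the user's message.
    query = body.get("query") if isinstance(body, dict) else None
    if not isinstance(query, str) or not query.strip():
        return _response(400, {"error": "Missing 'query' field."})

    return _response(200, {"answer": run_agent(query.strip())})
```

When packaged as a container image, the AWS Python base image runs whichever handler its `CMD` names, for example `app.lambda_handler`. The module name `app` is an assumption here.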

Development Process

From my time working at The Third Floor, I knew how important it is to be able to quickly get information on a show or track down a specific file. This project seemed like something that would be of real value and a good application of LLM and RAG techniques.

The first problem to sort out was which data source to use for the project. Luckily, Blender Studio offers open-source assets from all of its short films dating back to 2006. This provided a perfect dataset to simulate the kind of production environment an animation studio would have. Using Blender also meant it would be easy to install and run in the data processing container, unlike a DCC such as Maya, which would require a license.

System Architecture

Below is a diagram of the system:

Antigravity with Claude Sonnet 4.5 was utilized in the development of this project, and was a great help in coding a system this large in the time frame that I had. It still required a large amount of effort and learning on my part, but allowed me to think bigger picture about the architecture of the system instead of being in the weeds of debugging.

Technical Implementation

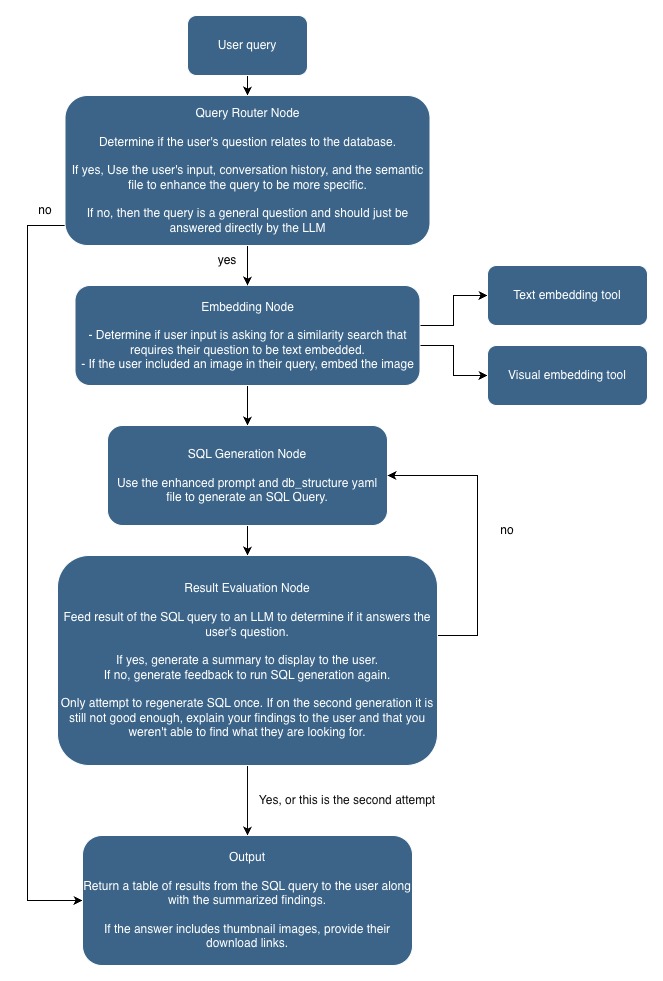

In my machine learning class last semester, I learned about OpenAI's CLIP model, which creates embeddings for both text and images in a shared embedding space. We covered it in the context of text-to-image diffusion models. It also seemed like a great way to let users enter a text description of the file they are looking for and have the system compare it against thumbnail image embeddings to find similar files. It would also let users upload an image to the assistant to search for similar thumbnails.
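Because CLIP places text and images in a shared embedding space, both search modes reduce to the same operation. The query is embedded, whether it is text or an uploaded image, and the stored thumbnail embeddings are ranked by cosine similarity. The sketch below shows only that ranking step, in plain Python. It uses made-up 3-dimensional vectors in place of real CLIP embeddings, and the file names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as unrelated
    return dot / (norm_a * norm_b)


def rank_thumbnails(
    query_emb: Sequence[float],
    thumbnails: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Return the top_k thumbnail paths, most similar first."""
    scored = [(path, cosine_similarity(query_emb, emb)) for path, emb in thumbnails.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy 3-D vectors; real CLIP embeddings have hundreds of dimensions.
thumbnails = {
    "sprite_fright/char_rig.png": [0.9, 0.1, 0.0],
    "spring/forest_env.png": [0.1, 0.8, 0.3],
    "agent327/office_set.png": [0.2, 0.2, 0.9],
}
query = [1.0, 0.2, 0.0]  # e.g. the embedding of a text query or an uploaded image
for path, score in rank_thumbnails(query, thumbnails, top_k=2):
    print(f"{score:.3f}  {path}")
```

This assumes embeddings are stored with a PostgreSQL extension such as pgvector. Under that assumption, the same ranking can run inside the database instead: pgvector's `<=>` operator computes cosine distance. That avoids loading every embedding into the Lambda function.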

Agent Structure

The chatbot agent itself is built using LangGraph, a library for building agentic systems. It supports complex execution flows that route each query to a different process. This system includes a number of nodes that determine the query type, embed user input, generate SQL, and output the results.
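The routing pattern can be illustrated without LangGraph itself. The plain-Python sketch below is not LangGraph's API. It mimics the pattern of nodes that read and update a shared state, plus a router that picks the next node. The node names, the keyword-based classifier (standing in for an LLM classification call), and the placeholder embedding and SQL strings are all hypothetical. In LangGraph proper, the same structure would be expressed with `StateGraph`, `add_node`, and `add_conditional_edges`.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]


def classify(state: State) -> State:
    # Hypothetical rule standing in for an LLM call that labels the query type.
    if state.get("image") is not None or "similar" in state["query"].lower():
        return {"query_type": "similarity"}
    return {"query_type": "metadata"}


def embed(state: State) -> State:
    # Placeholder: a real node would run CLIP on the text or uploaded image.
    return {"embedding": [0.0, 0.0, 0.0]}


def generate_sql(state: State) -> State:
    # Placeholder: a real node would prompt an LLM with the schema to write SQL.
    if "embedding" in state:
        sql = "SELECT path FROM thumbnails ORDER BY distance LIMIT 5"
    else:
        sql = "SELECT path FROM assets WHERE name ILIKE %s"
    return {"sql": sql}


def output(state: State) -> State:
    return {"answer": f"[{state['query_type']}] {state['sql']}"}


NODES: Dict[str, Callable[[State], State]] = {
    "classify": classify,
    "embed": embed,
    "generate_sql": generate_sql,
    "output": output,
}


def next_node(current: str, state: State) -> Optional[str]:
    # Conditional edge: only similarity queries pass through the embed node.
    if current == "classify":
        return "embed" if state["query_type"] == "similarity" else "generate_sql"
    # Fixed edges; "output" has no successor, so .get returns None and the run ends.
    return {"embed": "generate_sql", "generate_sql": "output"}.get(current)


def run(query: str, image: Optional[bytes] = None) -> State:
    state: State = {"query": query, "image": image}
    node: Optional[str] = "classify"
    while node is not None:
        state.update(NODES[node](state))  # merge each node's partial update
        node = next_node(node, state)
    return state
```

Keeping every node as a function from state to a partial update makes each step easy to test on its own. This is also the shape that LangGraph nodes take.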

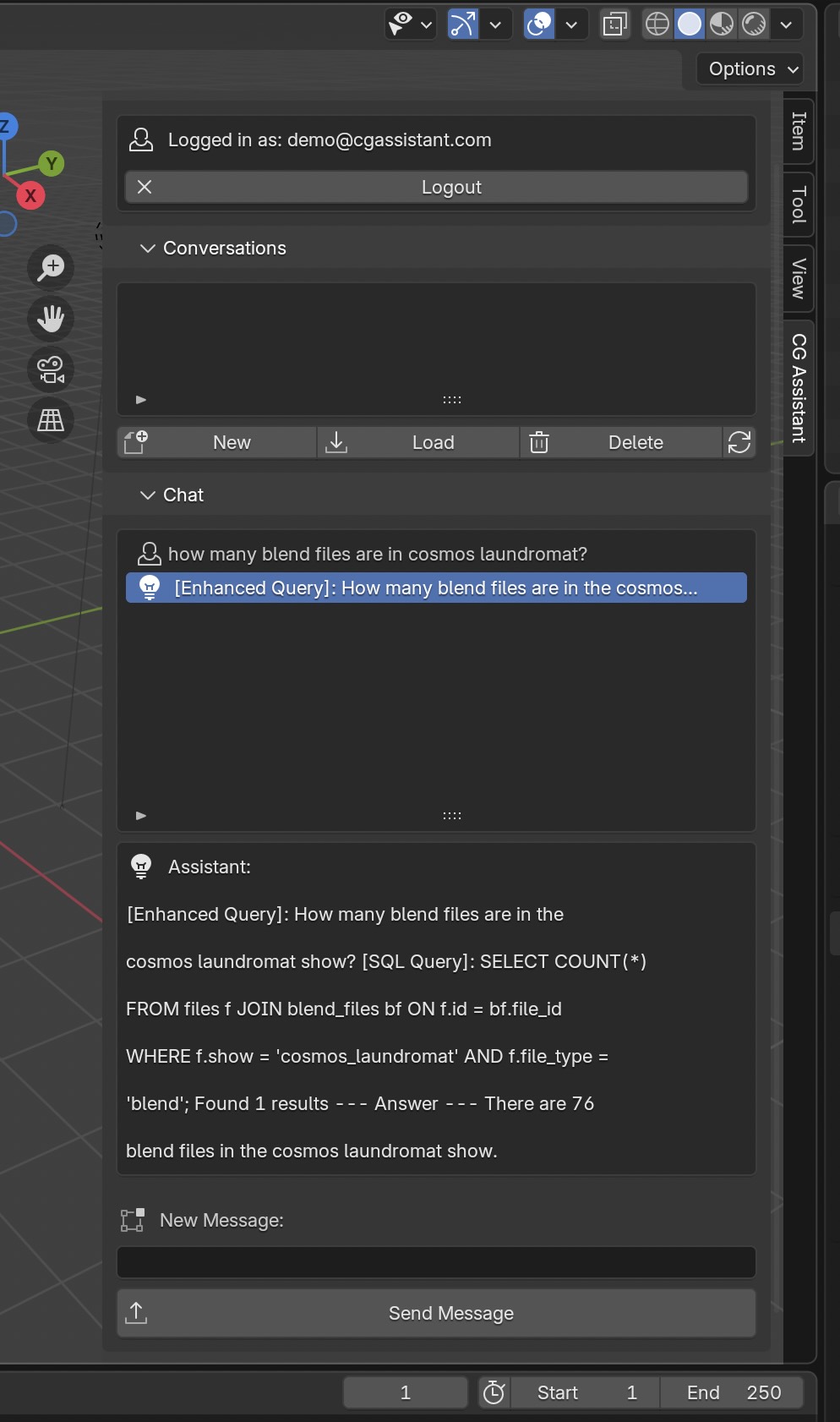

Blender Addon Frontend (Work in Progress)

I am also working on a Blender addon that provides a frontend for the assistant directly in the Blender UI. It has all the functionality of the Gradio frontend. It also lets the user take a snapshot render of the active viewport to use in the chatbot for similarity search in the database. It will also be able to download and open .blend files from the database directly. This is still a work in progress and needs more polishing.

Future Plans

There are a few features that I'd like to implement in the future that I haven't been able to get to yet.

- It should be possible to either train a custom ML model or find an existing one that classifies the shot type of film stills (close-up, medium shot, etc.). The shot type could then be added to the metadata tags to allow searching by these parameters.

- A chatbot UI integrated into ShotGrid (Flow Production Tracking) would make the assistant more convenient to use, particularly for production staff.

- Right now the system is mainly built for archived data. It would be great to add functionality for active shows that scans new files into the database as they are created and removes them when they are deleted. This could possibly use a Perforce integration to detect when files change.
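For active shows, one simple approach is to periodically diff the files on disk against what the database already indexes. The sketch below assumes the database stores each file's last-modified time at index time. The helper names `snapshot`, `SyncPlan`, and `plan_sync` are hypothetical. This is a polling approach, not a Perforce integration.

```python
import os
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


def snapshot(root: str, extensions: Tuple[str, ...] = (".blend",)) -> Dict[str, float]:
    """Map every matching file under root to its last-modified time."""
    files: Dict[str, float] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                files[path] = os.path.getmtime(path)
    return files


@dataclass
class SyncPlan:
    to_add: List[str] = field(default_factory=list)
    to_update: List[str] = field(default_factory=list)
    to_remove: List[str] = field(default_factory=list)


def plan_sync(on_disk: Dict[str, float], indexed: Dict[str, float]) -> SyncPlan:
    """Diff the current snapshot against the mtimes stored at last index time."""
    plan = SyncPlan()
    for path, mtime in sorted(on_disk.items()):
        if path not in indexed:
            plan.to_add.append(path)  # new file: extract metadata and embed thumbnail
        elif mtime > indexed[path]:
            plan.to_update.append(path)  # modified since last scan: re-index
    plan.to_remove = sorted(set(indexed) - set(on_disk))  # deleted from disk
    return plan
```

A full scan is the simplest option, but it gets slow on large shows. An event source such as a version-control trigger would only need to pass the changed paths into the same add/update/remove logic.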